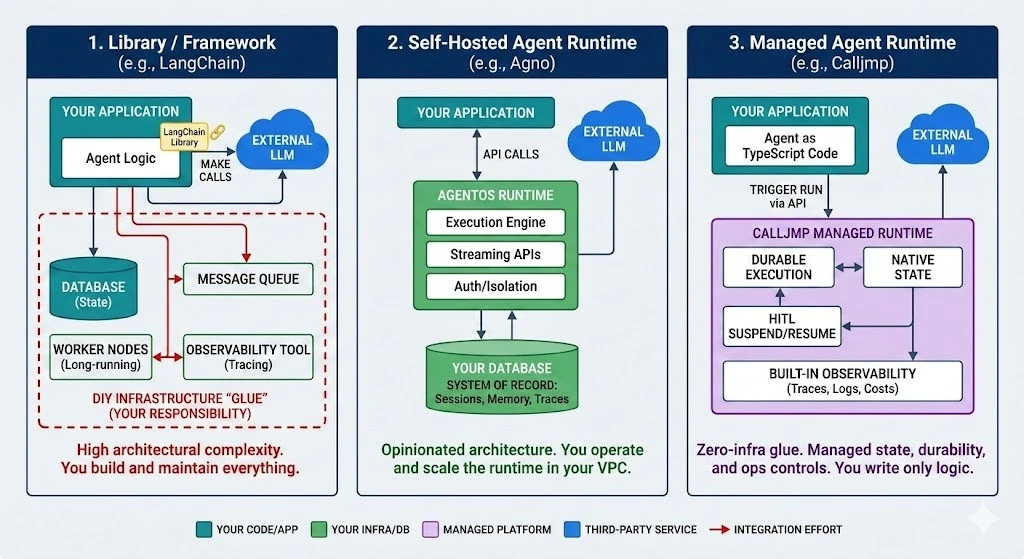

AI agents as code have moved from terminal demos to production systems — and the infrastructure gap is now the bottleneck, not model intelligence. Teams shipping AI features into real products need durable state, long-running execution, human-in-the-loop approvals, and observability. Three platforms represent three fundamentally different ways to solve this: LangChain (open-source library), Agno (self-hosted runtime), and Calljmp (managed agentic backend).

This guide breaks down how each platform handles the six areas that matter most in production, so you can match the right architecture to your team.

What Each Platform Actually Is

These three are not interchangeable tools. They represent different architectural philosophies, and understanding that distinction matters more than any feature checklist.

LangChain is an open-source library for composing LLM-powered applications. Available in Python and JavaScript, it gives you building blocks — prompts, tool connectors, RAG pipelines, agent routing — that you embed into your existing backend. LangChain handles the intelligence layer. Everything else — queues, state databases, scaling, monitoring — is your responsibility.

Agno is a self-hosted agentic architecture. It pairs an SDK for defining agents, teams, and workflows (primarily Python) with AgentOS, a production runtime that handles streaming, authentication, request isolation, and approval enforcement. You get an opinionated blueprint for how agent services should run, but you deploy and operate it inside your own infrastructure.

Calljmp is a managed agentic backend. You write AI agents as code, deploy them to a hosted runtime, and Calljmp handles execution, state persistence, retries, timeouts, and observability. It sits alongside your existing app backend — your product logic stays where it is, while Calljmp manages the agent lifecycle.

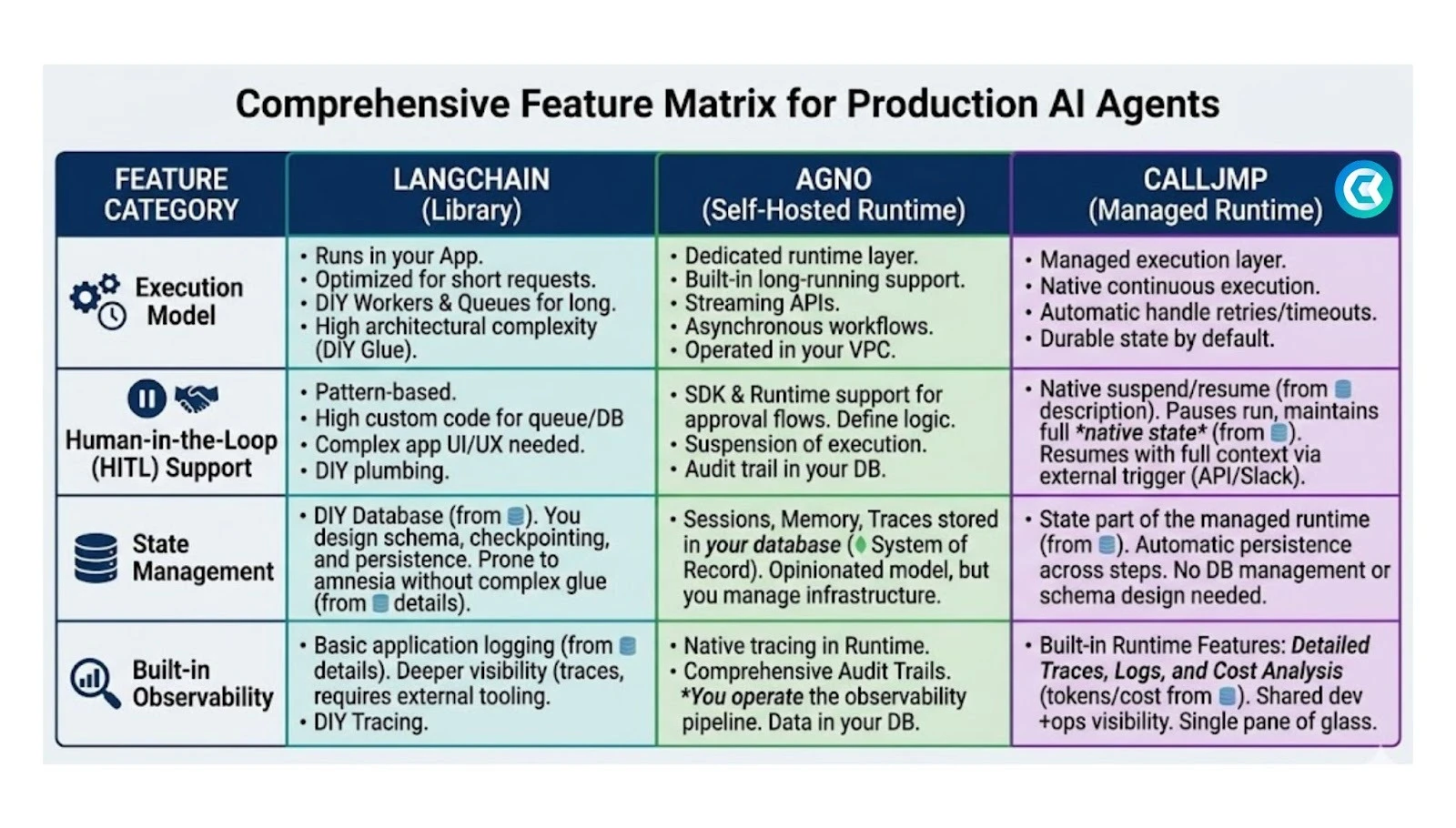

1. Execution Model

How an agent runs determines whether it survives real-world conditions.

LangChain executes within your application’s lifecycle. It works well for synchronous request/response flows — a user asks a question, the agent processes it, returns an answer. When workflows stretch to minutes or hours (researching a topic, drafting a report, processing a batch), you need to decouple agent logic into background workers, build queue systems, and handle timeouts yourself.
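To make the decoupling concrete, here is a minimal sketch of the pattern using only the Python standard library. It is not LangChain code: `run_agent`, `submit`, `worker`, and the in-memory `jobs` and `results` objects are hypothetical stand-ins for an agent call, a durable queue, and a results table that you would supply yourself.

```python
import queue
import threading
import time

# Hypothetical stand-in for a slow agent call (an LLM chain, a tool loop, ...).
def run_agent(task: str, seconds: float) -> str:
    time.sleep(seconds)
    return f"done: {task}"

jobs: queue.Queue = queue.Queue()  # in production: a durable queue, not process memory
results: dict = {}                 # in production: a results table

def submit(job_id: str, task: str, seconds: float) -> str:
    """Called from the request handler: enqueue the job and return immediately."""
    jobs.put((job_id, task, seconds))
    return job_id

def _run(task: str, seconds: float, box: dict) -> None:
    box["out"] = run_agent(task, seconds)

def worker(timeout_s: float) -> None:
    """Process jobs one at a time, giving up on any job that exceeds timeout_s."""
    while True:
        job = jobs.get()
        if job is None:  # sentinel value: stop the worker
            break
        job_id, task, seconds = job
        box: dict = {}
        t = threading.Thread(target=_run, args=(task, seconds, box), daemon=True)
        t.start()
        t.join(timeout_s)
        # Python cannot kill a thread, so a timed-out job is abandoned, not cancelled.
        results[job_id] = box.get("out", "timed out")
```

A request handler would call `submit(...)` and return the job ID right away, while `worker` runs in a separate thread or process. Everything this sketch leaves out (durability across restarts, retries, cancellation, scaling to multiple workers) is the infrastructure work described above.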

Agno runs as a dedicated infrastructure layer via AgentOS. It handles background execution and request isolation natively, and exposes streaming APIs for asynchronous tasks. This is a meaningful step up from raw LangChain, but reliability depends on how well your team manages the servers running AgentOS.

Calljmp provides managed execution where every run has a distinct identity and a guaranteed lifecycle. Trigger a run via API, and the platform orchestrates it across steps, persists state at every stage, and handles retries and backoff automatically. Your engineering team does not build or operate the workers, queues, or timeout handling behind long-running processes.
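For readers unfamiliar with the term, the sketch below shows what "retries and backoff" means in general. It is a generic illustration, not Calljmp's API or implementation: `run_with_retries` and its parameters are hypothetical names, and the doubling delay is one common backoff policy among several.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

def run_with_retries(
    step: Callable[[], T],
    max_attempts: int = 4,          # assumed to be >= 1
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call step() until it succeeds, doubling the wait after each failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Waits of base, 2*base, 4*base, ... between attempts.
            sleep(base_delay_s * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")
```

The `sleep` parameter is injectable so the policy can be tested without real waiting. A production version would usually add jitter and retry only on errors known to be transient.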

Bottom line: LangChain requires you to build execution infrastructure. Agno gives you the architecture but you operate it. Calljmp operates it for you.

2. State Management

Agents that forget what they were doing mid-workflow are useless in production. State management (remembering conversation context, tool call results, and position within a plan) is one of the hardest infrastructure problems in agentic systems.

LangChain takes a bring-your-own-database approach. The framework provides checkpoint concepts (especially in LangGraph), but you provision, secure, and maintain the storage. You write the serialization logic. You own the failure modes.
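As a rough picture of the bring-your-own-database work, the following sketch stores one checkpoint per run in SQLite with JSON serialization. It does not use LangChain's or LangGraph's checkpoint interfaces: the `checkpoints` table, `open_store`, `save_checkpoint`, and `load_checkpoint` are all hypothetical names chosen for illustration.

```python
import json
import sqlite3
from typing import Optional, Tuple

def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the checkpoint store. ':memory:' is for demos only."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        "run_id TEXT PRIMARY KEY, step INTEGER NOT NULL, state TEXT NOT NULL)"
    )
    return conn

def save_checkpoint(conn: sqlite3.Connection, run_id: str, step: int, state: dict) -> None:
    """Overwrite the latest checkpoint for a run. State must be JSON-serializable."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO checkpoints (run_id, step, state) VALUES (?, ?, ?)",
            (run_id, step, json.dumps(state)),
        )

def load_checkpoint(conn: sqlite3.Connection, run_id: str) -> Optional[Tuple[int, dict]]:
    """Return (step, state) for a run, or None if it has no checkpoint yet."""
    row = conn.execute(
        "SELECT step, state FROM checkpoints WHERE run_id = ?", (run_id,)
    ).fetchone()
    return None if row is None else (row[0], json.loads(row[1]))
```

Even at this size the ownership questions show up: what happens to state that is not JSON-serializable, how schema changes are migrated, and who secures and backs up the database.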

Agno models sessions, memory, and traces explicitly in its SDK and AgentOS. The framework handles reads and writes, but stores everything in your database. This is a strong fit for teams with data residency requirements — state never leaves your network.

Calljmp treats durable state as a native platform primitive. As your agent progresses through its logic, the runtime persists context automatically. A workflow that waits days for an external webhook simply suspends, offloads from memory, and resumes later with full context intact.

Bottom line: LangChain gives you maximum control and maximum work. Agno gives you structured state with self-hosted ownership. Calljmp persists state for you as part of the runtime, so your application code does not manage it.

3. Human-in-the-Loop Approvals

Production agents must not execute destructive actions — deleting records, sending mass emails, issuing refunds — without human oversight.

LangChain requires you to engineer the entire approval flow: pause the agent, persist its state, send a notification, expose an approval endpoint, then reconstruct the agent’s context to resume. This is a significant custom build.
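The steps in that flow can be sketched in plain Python. This is an illustration under stated assumptions, not a LangChain feature: `request_approval`, `resolve_approval`, and `execute` are hypothetical names, and the `PENDING` and `NOTIFICATIONS` containers stand in for a durable table and a real notification channel.

```python
import json
import uuid

PENDING: dict = {}        # stand-in for a durable approvals table
NOTIFICATIONS: list = []  # stand-in for email, Slack, or a review queue

def execute(action: str, context: dict) -> dict:
    """Hypothetical side-effecting step that only runs after approval."""
    return {"status": "executed", "action": action, "context": context}

def request_approval(action: str, context: dict) -> str:
    """Pause: persist the agent's context, notify a reviewer, return a ticket ID."""
    approval_id = str(uuid.uuid4())
    PENDING[approval_id] = json.dumps({"action": action, "context": context})
    NOTIFICATIONS.append(f"Approval needed for {action!r}: {approval_id}")
    return approval_id

def resolve_approval(approval_id: str, approved: bool) -> dict:
    """What an approval endpoint would call: reload the context, then resume or abort."""
    # pop() makes each approval single-use; an unknown or reused ID raises KeyError.
    saved = json.loads(PENDING.pop(approval_id))
    if not approved:
        return {"status": "rejected", "action": saved["action"]}
    return execute(saved["action"], saved["context"])
```

For example, `request_approval("issue_refund", {"order": "A1", "amount": 40})` returns an ID that a reviewer later passes to `resolve_approval`. A real build also needs authentication on the endpoint, expiry for stale requests, and storage that survives a process restart.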

Agno includes approval flows in its SDK and enforcement at the AgentOS level. The engine understands pausing for permission. You still build the UI integration and operate the system, but the architectural patterns are defined.

Calljmp offers native suspend-and-resume for HITL. A running workflow pauses itself, emits an event for human input (via UI, Slack, or API), and continues from the exact same point with full context. The platform handles state suspension and resumption — what would be a distributed systems challenge becomes a few lines of code.

Bottom line: HITL is a major custom build in LangChain, a structured pattern in Agno, and a built-in primitive in Calljmp.

4. Observability

AI agents are non-deterministic. When they make mistakes, you need to reconstruct exactly what happened — what prompt was sent, which tools were called, what data came back, and what it cost.
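One way to picture the data a trace needs to hold is the sketch below. It is not any platform's trace schema: `Step`, `RunTrace`, and the per-1,000-token prices passed to `cost_usd` are hypothetical, and real pricing varies by model and provider.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Step:
    name: str
    prompt: str            # what was sent to the model
    tool_calls: List[str]  # which tools were invoked
    output: str            # what came back
    input_tokens: int
    output_tokens: int

@dataclass
class RunTrace:
    run_id: str
    steps: List[Step] = field(default_factory=list)

    def record(self, step: Step) -> None:
        self.steps.append(step)

    def cost_usd(self, usd_per_1k_input: float, usd_per_1k_output: float) -> float:
        """Total cost of the run given caller-supplied (placeholder) prices."""
        return sum(
            s.input_tokens / 1000 * usd_per_1k_input
            + s.output_tokens / 1000 * usd_per_1k_output
            for s in self.steps
        )
```

Recording one `Step` per model or tool call is enough to replay a run in order and attribute cost to individual steps. The three platforms differ in who creates and stores these records, not in whether you need them.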

LangChain users typically add LangSmith (a separate product from the LangChain team) or a third-party tracing tool. This works, but adds another service to configure, pay for, and maintain.

Agno includes native tracing and audit logging in AgentOS. All trace data lives in your database, giving you full control over telemetry and the ability to build internal dashboards.

Calljmp captures traces, logs, and token costs automatically as part of execution. Because it manages the runtime, every step of every run is instrumented by default. Teams get a unified dashboard for investigating runs, comparing behaviors, tracking regressions, and monitoring costs, with no separate tracing setup required.

Bottom line: LangChain requires external tooling. Agno gives you self-hosted traces. Calljmp includes observability as a native layer.

5. Developer Experience and Language

LangChain supports Python and JavaScript/TypeScript with a massive ecosystem of integrations. Maximum flexibility, maximum community resources, but also maximum assembly required.

Agno is primarily Python-focused. The SDK and AgentOS are designed as a cohesive Python-native stack. Strong for ML-heavy teams, but limits adoption for teams working in other languages.

Calljmp is built around TypeScript. Agents are defined as code alongside your application logic, with full type safety. The developer experience is designed for backend and platform engineers who want to write agent logic without managing infrastructure.

Bottom line: Choose the platform that matches your team’s primary language and infrastructure appetite.

6. Total Cost of Ownership

The sticker price of open-source tools is misleading. The real cost includes cloud compute for workers, managed databases for state, observability platform subscriptions, and — most significantly — the engineering hours spent building and maintaining infrastructure glue.

LangChain is free to use. The TCO comes from the surrounding infrastructure you build and operate: queues, state stores, monitoring tools, and the engineering time to keep it all running.

Agno is also free at the core. The TCO includes operating AgentOS within your infrastructure, maintaining database schemas for state and traces, and the DevOps overhead of keeping the runtime highly available.

Calljmp charges for the managed runtime directly. The TCO trade-off is that it eliminates custom infrastructure — state, execution, HITL, retries, and observability are bundled. Teams ship production agents faster and redirect engineering time from infrastructure to product work.

Quick Comparison

| Capability | LangChain | Agno | Calljmp |
|---|---|---|---|
| Architecture | Library you embed | Self-hosted runtime | Managed agentic backend |
| Primary language | Python + JS/TS | Python | TypeScript |
| Execution model | Tied to your app lifecycle | Dedicated infra layer (you operate) | Managed with guaranteed lifecycle |
| State management | Bring your own database | Your database, structured SDK | Native platform primitive |
| HITL approvals | Custom engineering required | SDK approval flows | Native suspend/resume |
| Observability | External tooling (e.g. LangSmith) | Self-hosted traces in your DB | Built-in traces, logs, costs |
| Long-running workflows | You build the queue system | AgentOS handles background execution | Managed with automatic retries |
| Infrastructure ownership | Fully yours | Fully yours | Managed by Calljmp |
| Best for | Max flexibility, strong infra team | Python teams, strict data residency | Fast production shipping, agents as code |

Which One Should You Choose?

Choose LangChain if you want maximum flexibility, your team is comfortable building production infrastructure, and you need broad ecosystem support across Python and JavaScript. LangChain is the right fit when you have dedicated backend engineers ready to own queues, state management, and monitoring.

Choose Agno if you are building in Python, want a structured and opinionated architecture, and have security or compliance requirements that mandate self-hosting the agent runtime with all data staying inside your network.

Choose Calljmp if your priority is shipping production AI features quickly. Calljmp is built for teams that want to write agent logic as code and offload execution, state, HITL, and observability to a managed agentic backend — without stitching together infrastructure from scratch.

FAQ

What is an agentic backend?

An agentic backend is a managed runtime layer that runs AI agents as persistent, stateful systems rather than single API calls. It handles execution, state persistence, retries, human-in-the-loop approvals, and observability so your app backend stays focused on product logic.

Can I use LangChain with Calljmp or Agno?

LangChain is a library that can be embedded within other architectures. You could use LangChain’s prompt composition or RAG utilities inside an agent deployed on Calljmp or Agno, though each platform also provides its own tooling for these capabilities.

Which platform is best for building a SaaS copilot?

For embedding an AI copilot inside a SaaS product, the key requirements are durable state, real-time streaming, and safe tool execution with HITL approvals. Agno and Calljmp both address these natively. Calljmp is purpose-built for this use case as a managed agentic backend, while Agno suits teams that need to self-host.

Do I need a separate backend for AI agents?

For production use cases involving long-running workflows, stateful execution, and tool orchestration, a dedicated agent runtime significantly reduces complexity compared to bolting agent logic onto your existing app backend. All three platforms address this in different ways: LangChain through custom infrastructure, Agno through self-hosted AgentOS, and Calljmp through a managed service.

What does “agent as code” mean?

Agent as code means defining your AI agent’s behavior, tools, memory, and workflow logic directly in a programming language rather than through visual builders or prompt-only configuration. This approach gives you version control, testing, type safety, and the ability to integrate agents deeply with your existing codebase.